#60509 closed defect (fixed)

Upgrading ports sometimes breaks internet connection

| Reported by: | iefdev (Eric F) | Owned by: | ryandesign (Ryan Carsten Schmidt) |
|---|---|---|---|
| Priority: | Normal | Milestone: | |
| Component: | base | Version: | 2.6.2 |
| Keywords: | | Cc: | ryandesign (Ryan Carsten Schmidt), jmroot (Joshua Root), breun (Nils Breunese) |
| Port: | | | |

Description (last modified by iEFdev)

Ok, I'm not really sure how to describe this, but…

Sometimes, when upgrading some ports, it hangs and then just sits there. As a result, all internet access is gone on the computer. It has happened 3-4 times over maybe the past 2 years. A restart of the computer fixes it. This last time (the other day) I also changed my DNS to 1.1.1.1 instead of using dnscrypt-proxy, which uses the same one. I don't know if that is related, or where/what to start looking at the next time it happens.

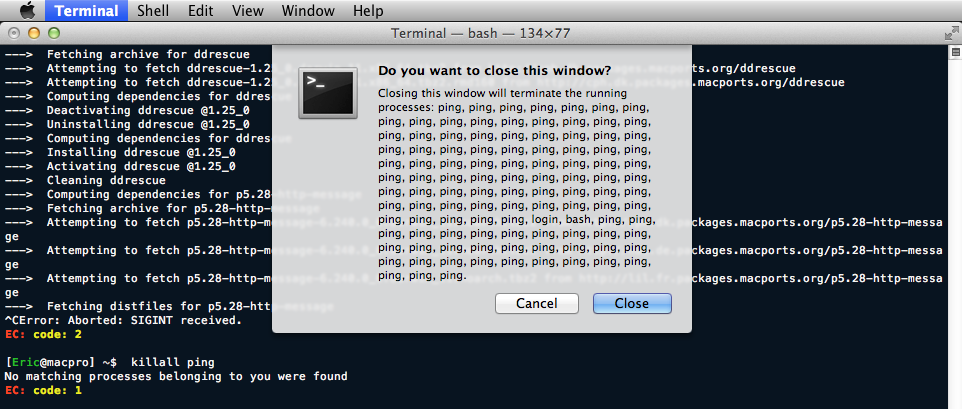

Last time it was a perl port, so I took a screenshot of it. When you try to close the Terminal window, a LOT of pings are still active/running, and they can't be killed off. Restarting Firefox says it already has a version running, etc. A full restart is the only way.

I couldn't find anything when searching. I don't know if it's a bug or not, but I wanted to report it anyway. It's clearly not the intended behaviour. At least I assume that. :)

Attachments (3)

Change History (45)

Changed 4 years ago by iEFdev

| Attachment: | ping_no_internet.png added |
|---|---|

comment:1 Changed 4 years ago by iEFdev

| Description: | modified (diff) |
|---|---|

comment:2 Changed 4 years ago by ryandesign (Ryan Carsten Schmidt)

| Cc: | ryandesign added |
|---|---|
| Summary: | Upgrading ports sometimes brakes internet connection → Upgrading ports sometimes breaks internet connection |

Changed 4 years ago by iEFdev

| Attachment: | LittleSnitch.png added |
|---|---|

comment:3 follow-up: 6 Changed 4 years ago by iEFdev

Thanks Ryan…

Replying to ryandesign:

I'm not familiar with dnscrypt-proxy and don't know whether it could be involved in the problem you're seeing.

I don't believe it is that. It's just like running any local DNS, like dnsmasq, etc… You use 127.0.0.1 as the DNS server and the program reaches out to the selected service instead.

Replying to ryandesign:

Based on the widget at the top right of the window in your screenshot, it looks like you're running OS X 10.7, ...

Yes, 10.7 it is.

Replying to ryandesign:

It may be unrelated, but ever since I set up a MacPorts build machine for 32-bit Mac OS X 10.6 in 2016 it has experienced intermittent kernel panics. Very often this occurs when building perl modules, and often the crash log shows that ping was the active process. I haven't seen the problem on our 64-bit build machines for 10.6 or later. But maybe there is a bug in ping, or a bug in the way that MacPorts calls it, that is only seen on specific computer configurations running old OS versions.

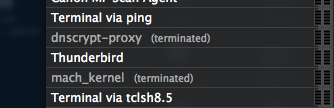

This might be something. The reason I changed the DNS was because I saw in my “Little Snitch” window that it got terminated at the same time it stopped working… but also, the mach_kernel - which maybe makes it related, since you mentioned the kernel panics.

I took a screenshot of that too, at the time:

So, perhaps it's somewhat related, since it was a perl port.

32/64… MacPorts sometimes says I'm i386, but uname says x86_64.

comment:4 follow-ups: 5 7 8 Changed 4 years ago by ryandesign (Ryan Carsten Schmidt)

| Cc: | jmroot added |
|---|---|

Looking back at the kernel panic logs I've saved from our 10.6 i386 builder, all of them are either in ping or grep or cut.

When MacPorts determines a server's ping time, it does so by running ping -noq -c3 -t3 $host | grep round-trip | cut -d / -f 5.

The code is in proc portfetch::sortsites in https://github.com/macports/macports-base/blob/master/src/port1.0/fetch_common.tcl.

MacPorts spawns ping and grep and cut processes for each host whose pingtime it needs to check. It doesn't need to check those that it checked within the past 24 hours, but if you haven't used MacPorts in over a day, then it needs to recheck them all.
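As a rough illustration of what that pipeline extracts, here is the same grep and cut applied to a made-up sample of the summary that BSD/macOS ping prints (the sample text and its numbers are assumptions, not captured from a real run):

```shell
# Illustrative sample only: the summary lines BSD/macOS ping prints at the end.
sample='--- 127.0.0.1 ping statistics ---
3 packets transmitted, 3 packets received, 0.0% packet loss
round-trip min/avg/max/stddev = 0.043/0.060/0.071/0.012 ms'

# Same filter as the MacPorts pipeline, fed from the sample instead of a live ping.
# Splitting the round-trip line on "/" makes field 5 the average time.
avg=$(printf '%s\n' "$sample" | grep round-trip | cut -d / -f 5)
echo "$avg"
```

Fields 1 to 4 are the label pieces and the minimum value, which is why the average ends up in field 5.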

An idea about why perl modules specifically might trigger the problem is that our list of perl cpan mirror sites is very large. There are currently 191 hosts in the list. Add to that the 16 MacPorts mirrors and that's 207 hosts to check all at once, for which MacPorts spawns 621 simultaneous processes. Each process surely needs at least one file descriptor. It could be that this is exceeding the number of available file descriptors, especially if your computer has many other open files already and/or has been online for awhile and you've been running programs that have leaked file descriptors (opened them but forgot to close them). It's possible that MacPorts itself is still leaking file descriptors somewhere. One such bug was already fixed previously. And if you are running out of file descriptors, then it is not at all surprising that Internet access, the ability to open programs and read and write files, and pretty much everything else about the computer would cease to function.
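The arithmetic behind those numbers can be sketched as follows. The host counts come from this comment; the last line only prints the per-process soft limit of the current shell for comparison and is not the system-wide limit.

```shell
cpan_hosts=191        # perl cpan mirrors, per the comment above
macports_mirrors=16   # MacPorts mirrors
procs_per_host=3      # ping, grep and cut

hosts=$((cpan_hosts + macports_mirrors))
procs=$((hosts * procs_per_host))
echo "$hosts hosts -> $procs simultaneous processes"

# Soft limit on open file descriptors for the current shell
# (per process, not system-wide), for comparison.
echo "open files limit for this shell: $(ulimit -n)"
```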

Possible solutions:

- We could trim the list of perl cpan mirrors to a more reasonable number.

- MacPorts base could spawn only a ping process for each host and perform the equivalent of the grep and cut commands using Tcl string processing.

- MacPorts base could limit the number of hosts that it checks simultaneously.
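A rough sketch of the ping-only idea from the list above. The real change would be Tcl code in MacPorts base; this is only a shell analogue under that assumption, with a hypothetical helper name (parse_avg_rtt) and a made-up sample, showing that the average can be extracted without any grep or cut process.

```shell
# Hypothetical helper: extract the average round-trip time from ping's
# output using only shell built-ins (no grep or cut processes).
parse_avg_rtt() {
    while IFS= read -r line; do
        case $line in
            round-trip*)
                rest=${line#*= }    # drop everything up to "= "
                rest=${rest#*/}     # drop the min value
                printf '%s\n' "${rest%%/*}"
                return 0
                ;;
        esac
    done <<EOF
$1
EOF
    return 1   # no round-trip line, e.g. the host did not answer
}

# Made-up sample in the BSD/macOS ping summary format.
sample='3 packets transmitted, 3 packets received, 0.0% packet loss
round-trip min/avg/max/stddev = 0.043/0.060/0.071/0.012 ms'
parse_avg_rtt "$sample"
```

Against a live host it would be called as parse_avg_rtt "$(ping -noq -c3 -t3 "$host")", which spawns one process per host instead of three.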

comment:5 Changed 4 years ago by iEFdev

Replying to ryandesign:

Looking back at the kernel panic logs I've saved from our 10.6 i386 builder, all of them are either in ping or grep or cut.

When MacPorts determines a server's ping time, it does so by running ping -noq -c3 -t3 $host | grep round-trip | cut -d / -f 5.

One could do the same line with awk instead – just to see if there's a difference? …if grep|cut is the problem.

ping -noq -c3 -t3 127.0.0.1 | awk -F '/' 'match($0, "round-trip") {print $5}'

MacPorts spawns ping and grep and cut processes for each host whose pingtime it needs to check. It doesn't need to check those that it checked within the past 24 hours, but if you haven't used MacPorts in over a day, then it needs to recheck them all.

Where is this recheck/update done? During the port syncing or when actually installing or upgrading a port?

For me it broke when upgrading the port itself, and not when checking for outdated ports.

An idea about why perl modules specifically might trigger the problem is that our list of perl cpan mirror sites is very large. There are currently 191 hosts in the list. Add to that the 16 MacPorts mirrors and that's 207 hosts to check all at once, for which MacPorts spawns 621 simultaneous processes. Each process surely needs at least one file descriptor.

That is a lot of mirrors.

When working with mirrors… Isn't location more important than ping times? Thinking… If location is automated from where I am, perhaps ping times could be centralized, and updated (just like Portindex) on a weekly basis? Or what's the reason behind the 24h, except being daily updated?

Unless there are daily updates of perl ports, it will check that every time.

It could be that this is exceeding the number of available file descriptors, especially if your computer has many other open files already and/or has been online for awhile and you've been running programs that have leaked file descriptors (opened them but forgot to close them).

I usually upgrade my ports when I start the computer. When done and cleaned up, I move on to emails and the browser. The mail client is opened all the time, checking emails on a 15min interval. Since Firefox is kind of a memory-hog - I usually have to restart it once or twice every day. Other than that, I usually quit programs when done.

And if you are running out of file descriptors, then it is not at all surprising that Internet access, the ability to open programs and read and write files, and pretty much everything else about the computer would cease to function.

That describes pretty much what happens.

- MacPorts base could limit the number of hosts that it checks simultaneously.

Just a thought… but could it be time related? I don't know what the x-man-page://ping looks like on newer versions of OS X/macOS, but can it be tweaked with other options to be more generous about timing? Like -m, -T, -t or -W.

I.e. what happens if I'm on a slow connection (which I am) and my connection can't keep up with the speed of the script?

comment:6 follow-ups: 10 25 Changed 4 years ago by ryandesign (Ryan Carsten Schmidt)

Replying to iEFdev:

32/64… MacPorts sometimes says I'm i386, but uname says x86_64.

There is an unfortunate terminology clash with the term "arch" in MacPorts.

The MacPorts variable os.arch has one of two possible values at this time: i386 means any computer with an Intel processor regardless whether 32-bit or 64-bit and powerpc means any computer with a PowerPC processor regardless whether 32-bit or 64-bit. MacPorts prints the value of os.arch in several places in the log.

The second and completely separate meaning of "arch" in MacPorts is that it can use four possible values for -arch flags: i386 (32-bit Intel), x86_64 (64-bit Intel), ppc (32-bit PowerPC), and ppc64 (64-bit PowerPC). These values appear in -arch flags in the log and also in archive filenames.
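To make the two meanings concrete, here is a small sketch that maps each -arch flag value to the os.arch value described above. The helper name os_arch_for is hypothetical and is not part of MacPorts.

```shell
# Hypothetical helper: map an -arch flag value to the os.arch value
# MacPorts would report for the same processor family.
os_arch_for() {
    case $1 in
        i386|x86_64) echo i386 ;;
        ppc|ppc64)   echo powerpc ;;
        *)           return 1 ;;
    esac
}

for arch in i386 x86_64 ppc ppc64; do
    printf '%s\n' "-arch $arch -> os.arch $(os_arch_for "$arch")"
done
```

So a 64-bit Intel machine builds with -arch x86_64 while its os.arch is still i386.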

Since you are on Lion, we know you have a 64-bit Intel processor; Lion and later require that. So I recognize that your situation is not identical to the one I mentioned on our 32-bit Snow Leopard build machine. But it had similarities that made me want to mention it anyway.

Replying to iEFdev:

One could do the same line with awk instead – just to see if there's a difference? …if grep|cut is the problem.

I don't think grep or cut specifically are the problem. I think part of the problem is we are spawning too many processes regardless what those processes are. Therefore one of my suggestions is to remove the use of all but the ping process and do the text processing in Tcl.

Where is this recheck/update done? During the port syncing or when actually installing or upgrading a port?

For me it broke when upgrading the port itself, and not when checking for outdated ports.

During the fetch phase MacPorts checks the ping times of the servers from which it might download during that phase.

When working with mirrors… Isn't location more important than ping times?

Maybe but we do not know the locations of the servers.

Thinking… If location is automated from where I am - perhaps ping times could be centralized, and updated (just like Portindex) on a weekly basis?

We update the Portindexes on our servers because the calculation should be the same for all users and by centralizing it we can save our users' CPU time.

Ping checks are not the same for all users. They depend on where in the world each user is. The point is to determine which servers have the best performance for that user so that they download files from servers that would be fastest for them. So we cannot do ping checks centrally on our servers.

There's also no point repeatedly (daily or weekly or even monthly) checking ping times for hosts that are not being used. We do have many old ports in our collection that still work but have not been updated for years. The current implementation of checking ping times right before deciding which of a set of hosts to download from seems fine.

If you are suggesting that we should use a geoip database to determine the approximate location of the user and create a central database with geoip-derived locations of all of our hosts and use that data to choose a server close to the user, then those would be major changes to how MacPorts and its infrastructure works and it could certainly be discussed elsewhere (bring it up on the macports-dev mailing list if you are strongly interested in this) but it would be a lot of work and I'm not sure the end result would be so much more accurate than our current ping time check that it would be worth the effort.

Or what's the reason behind the 24h, except being daily updated?

We don't want to check the ping times every single time we fetch; that would be overkill. So we cache the values for 24 hours. I guess Joshua thought that was a reasonable length of time when he implemented it. We don't want the values to get too stale. A user's location in the world (or a server's) might change which would invalidate the ping results. Or an Internet service provider might add additional links between their network and others which might make connectivity between the user and some servers faster. So we need to recheck ping times periodically.
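The 24-hour rule amounts to a simple age check. A minimal sketch, assuming timestamps are kept as epoch seconds; the helper name needs_recheck and the strict "older than" comparison are assumptions, not taken from the MacPorts code.

```shell
max_age=86400   # 24 hours in seconds

# Hypothetical helper: succeed if a ping time recorded at $1 (epoch seconds)
# is too old to reuse at time $2.
needs_recheck() {
    [ $(( $2 - $1 )) -gt "$max_age" ]
}

now=$(date +%s)
if needs_recheck "$((now - 90000))" "$now"; then
    echo "recorded 25 hours ago: ping again"
fi
if ! needs_recheck "$((now - 3600))" "$now"; then
    echo "recorded 1 hour ago: reuse cached value"
fi
```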

Unless there are daily updates of perl ports, it will check that every time.

Not necessarily. Many of the hosts used in the perl cpan fetch group also store non-perl files and might be used by other ports, so the ping times of some of the hosts might already be known due to other non-perl port updates.

Just a thought… but could it be time related? I don't know what the x-man-page://ping looks like on newer versions of OS X/macOS, but can it be tweaked with other options to be more generous about timing? Like -m, -T, -t or -W.

As shown above, we already use -t3 to limit the total time of each ping process to 3 seconds. Since we spawn all pings simultaneously, the total time taken should be no more than 3 seconds plus however long it takes the OS to spawn the processes. I don't know whether adding any other ping flags would be useful.

I.e. what happens if I'm on a slow connection (which I am) and my connection can't keep up with the speed of the script?

You can read the code I linked to earlier if you like, but if MacPorts cannot determine the ping time of a host, it assigns it a fake high ping time. If your network is not up to the task of processing 207 pings in 3 seconds, then the list of ping times will have some inaccuracies, which will have the consequence that for 24 hours MacPorts might try to download files from hosts in an order which is not necessarily the fastest for you.
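The effect of the fake high ping time can be sketched like this. The placeholder value 10000, the helper name rank_hosts, the "host [rtt]" input format and the example host names are all assumptions for illustration; the actual value and data structures are in the linked Tcl code.

```shell
# Hypothetical helper: read "host [rtt_ms]" lines, give hosts without a
# measured time a large placeholder, and print hosts fastest first.
rank_hosts() {
    awk -v fallback=10000 '{ rtt = (NF >= 2 ? $2 : fallback); print rtt, $1 }' |
        sort -n |
        awk '{ print $2 }'
}

# b.example has no measured time, so it sorts last.
printf '%s\n' 'a.example 120.5' 'b.example' 'c.example 35.2' | rank_hosts
```

A host that timed out is still usable; it just ends up at the end of the download order.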

So far I see no evidence that this is a slow network problem. It seems more likely to me that MacPorts is overtaxing the OS by launching so many simultaneous processes and thereby using up so many file descriptors simultaneously.

comment:7 Changed 4 years ago by ryandesign (Ryan Carsten Schmidt)

Replying to ryandesign:

- MacPorts base could spawn only a ping process for each host and perform the equivalent of the grep and cut commands using Tcl string processing.

comment:8 follow-up: 9 Changed 4 years ago by jmroot (Joshua Root)

Replying to ryandesign:

Possible solutions:

- We could trim the list of perl cpan mirrors to a more reasonable number.

- MacPorts base could spawn only a ping process for each host and perform the equivalent of the grep and cut commands using Tcl string processing.

Why not both? :-)

- MacPorts base could limit the number of hosts that it checks simultaneously.

That's not easy to do correctly and efficiently. The pings are run in parallel so that the overall time for the check is not the sum of the individual response times (which may be up to 3 seconds in case of a timeout).

comment:9 follow-up: 29 Changed 4 years ago by ryandesign (Ryan Carsten Schmidt)

Replying to jmroot:

Replying to ryandesign:

Possible solutions:

- We could trim the list of perl cpan mirrors to a more reasonable number.

- MacPorts base could spawn only a ping process for each host and perform the equivalent of the grep and cut commands using Tcl string processing.

Why not both? :-)

I agree; we can work on any and all of these items.

Yet another idea might be:

- MacPorts base could have a hard upper limit of the number of hosts for which it will check ping times at a time, say 50 or 100. If there are more hosts than that such as in the case of perl, MacPorts could pick 50 or 100 hosts from the list at random (although maybe the MacPorts mirrors should always be included). Ideally any excess hosts that were not checked would be marked for immediate recheck, so that if a user upgrades several perl modules at once then for the first module maybe not all hosts were pinged but by the second or third module all of the pingtimes are known.

- MacPorts base could limit the number of hosts that it checks simultaneously.

That's not easy to do correctly and efficiently. The pings are run in parallel so that the overall time for the check is not the sum of the individual response times (which may be up to 3 seconds in case of a timeout).

I agree, that's why I'm starting with the easier task with (2).

comment:10 follow-up: 11 Changed 4 years ago by iEFdev

Thanks for all answers Ryan. 👍

Replying to ryandesign:

Replying to iEFdev:

32/64… MacPorts sometimes says I'm i386, but uname says x86_64.

There is an unfortunate terminology clash with the term "arch" in MacPorts.

# ...

Since you are on Lion, we know you have a 64-bit Intel processor; Lion and later require that. So I recognize that your situation is not identical to the one I mentioned on our 32-bit Snow Leopard build machine. But it had similarities that made me want to mention it anyway.

Lion's a bit of a mess about the architecture and how it's reported back. It actually shows different types with different commands, but also within uname:

    $ uname -m
    x86_64
    $ uname -p
    x86_64
    $ uname -v
    ...; root:xnu-1699.32.7~1/RELEASE_X86_64
    $ uname -pv
    ...; root:xnu-1699.32.7~1/RELEASE_X86_64 i386
    $ uname -a
    ...; root:xnu-1699.32.7~1/RELEASE_X86_64 x86_64

I found that a bit funny. :)

…plus arch says i386, and machine says i486.

They have diff meaning and usage, but still…

I think I've read somewhere that Lion has some sort of mixed version, and that 10.8 was the first true 64-bit.

Replying to iEFdev:

One could do the same line with awk instead – just to see if there's a difference? …if grep|cut is the problem.

I don't think grep or cut specifically are the problem. I think part of the problem is we are spawning too many processes regardless what those processes are. Therefore one of my suggestions is to remove the use of all but the ping process and do the text processing in Tcl.

# ...

So far I see no evidence that this is a slow network problem. It seems more likely to me that MacPorts is overtaxing the OS by launching so many simultaneous processes and thereby using up so many file descriptors simultaneously.

Yes, and that was part of the idea behind using awk. The number of processes would be cut by a third. I saw the changeset now, and that will basically do the same thing… (i.e. ping + all in one take). That change alone will probably solve the problem, but cutting down the number of mirrors as well sounds great.

If you are suggesting that we should use a geoip database to determine the approximate location of the user and create a central database with geoip-derived locations of all of our hosts and use that data to choose a server close to the user, then those would be major changes to how MacPorts and its infrastructure works and it could certainly be discussed elsewhere (bring it up on the macports-dev mailing list if you are strongly interested in this) but it would be a lot of work and I'm not sure the end result would be so much more accurate than our current ping time check that it would be worth the effort.

Well, that would be a giant implementation just for this - so, unless there are some real benefits for other parts as well, it sounds a bit too much. I see your point.

comment:11 Changed 4 years ago by ryandesign (Ryan Carsten Schmidt)

Replying to iEFdev:

I think I've read somewhere that Lion has some sort of mixed version, and that 10.8 was the first true 64-bit.

In Lion and Snow Leopard, some 64-bit computers still boot with a 32-bit kernel (as all computers did in Leopard and earlier) but can run 64-bit programs. In Mountain Lion and later, all computers boot with the 64-bit kernel.

comment:12 Changed 4 years ago by iEFdev

It happened again today. Another perl port, so I'd guess it's safe to say those are the problem. I restarted and used the file from pr177, but it didn't solve it, so perhaps trimming the list is needed (i.e. solution 1).

comment:13 Changed 4 years ago by ryandesign (Ryan Carsten Schmidt)

It's bound to happen more frequently for you temporarily because our 10.7 buildbot worker is offline, so while you used to receive binaries for most perl modules, now that we're not building them your machine is having to build them itself and is pinging all those hosts beforehand. I hope to get that server back online soon.

comment:14 Changed 4 years ago by ryandesign (Ryan Carsten Schmidt)

comment:15 follow-ups: 16 20 Changed 4 years ago by ryandesign (Ryan Carsten Schmidt)

I haven't seen the kernel panic on the 32-bit Snow Leopard buildbot worker in a couple weeks, but I applied this patch to its copy of MacPorts anyway.

comment:16 Changed 4 years ago by iEFdev

Replying to ryandesign:

It's bound to happen more frequently for you temporarily because our 10.7 buildbot worker is offline, so while you used to receive binaries for most perl modules, now that we're not building them your machine is having to build them itself and is pinging all those hosts beforehand. I hope to get that server back online soon.

Aah, I see… Thanks for the info. 👍

Replying to ryandesign:

I haven't seen the kernel panic on the 32-bit Snow Leopard buildbot worker in a couple weeks, but I applied this patch to its copy of MacPorts anyway.

Great! I added it here (manually) in my install + I trimmed down the list quite a bit.

Speaking of the list. Apart from trimming it, I think it needs an update. For example…

- the PHP mirrors are gone, aren't they? All redirects to www.php.net now, and there's no mirror.php page anymore.

- For debian, they also have their default one: http://ftp.debian.org/debian/pool/main/ (ie without a country code in it).

- I also saw a dead link. There's no unix section there anymore: http://ftp.sunet.se/pub/

So, assuming there are a few other dead links… Could that affect the ping process and potentially cause the break?

And, most mirrors use https now, don't they? Same example: http://ftp.sunet.se/pub/ -> https://ftp.sunet.se/pub/ When/if trimming the list, https sites should be prioritized. In case some of them use redirects (->https) it could also make it faster.

comment:17 Changed 4 years ago by ryandesign (Ryan Carsten Schmidt)

Please file separate tickets for the issues with the mirror list so that this ticket can stay focused on the ping issue.

Offline hosts should not break the ping process; it is designed to accommodate that.

comment:18 Changed 4 years ago by kencu (Ken)

all the https links will likely fail on older systems about 10.9 and less unless users have followed my bootstrap procedure.

comment:19 Changed 4 years ago by ryandesign (Ryan Carsten Schmidt)

Not necessarily. But separate ticket, please.

comment:20 follow-up: 21 Changed 4 years ago by ryandesign (Ryan Carsten Schmidt)

Replying to ryandesign:

I haven't seen the kernel panic on the 32-bit Snow Leopard buildbot worker in a couple weeks, but I applied this patch to its copy of MacPorts anyway.

Saying that turned out to be a great way to cause a kernel panic to happen. :) At least I assume that's what happened. No panic log was generated.

comment:21 follow-up: 22 Changed 4 years ago by iEFdev

Replying to ryandesign:

Saying that turned out to be a great way to cause a kernel panic to happen. :) At least I assume that's what happened. No panic log was generated.

I guess it needs a good trim then? (ie option 1)

I'm sitting and updating the mirror_sites.tcl at the moment… Just did the cpan. Going through their list of mirrors, and just picking the http(s) sites as before, there are ≈ 30 fewer now.

I saw they also have a status page, with misc reports/probes, like “hangs”. I've removed a few of the bad ones.

comment:22 follow-up: 23 Changed 4 years ago by ryandesign (Ryan Carsten Schmidt)

Replying to iEFdev:

I guess it needs a good trim then? (ie option 1)

Having MacPorts ping fewer servers at once seems like it would help. I don't know what the best way to do that is though. Manually trimming the list in mirror_sites.tcl is one option but leaves open the question about how to decide which ones to remove, how many to remove, and how to ensure this and any other lists of sites don't grow too big in the future. That's why I favor a solution in MacPorts base, whether that's to check only a handful of servers each time MacPorts fetches a port's files until after a few ports all servers have been checked (seems doable), or limit the number of servers pinged at a time but check them all before fetching the first port's files (seems difficult, as Josh said, and could make the user wait longer than they expected for all the results to come in).

comment:23 follow-up: 24 Changed 4 years ago by iEFdev

Replying to ryandesign:

Replying to iEFdev:

I guess it needs a good trim then? (ie option 1)

Having MacPorts ping fewer servers at once seems like it would help. I don't know what the best way to do that is though. Manually trimming the list in mirror_sites.tcl is one option but leaves open the question about how to decide which ones to remove, how many to remove, and how to ensure this and any other lists of sites don't grow too big in the future.

When I updated the list now, I didn't trim anything, and as I wrote in the commit msg… leaving that for you. I only left out a few that looked bad on the status page.

I guess one could set up rules/guidelines, like max 10/country, 20/continent, or something like that - and use the status page to make sure the srv looks good and doesn't have freq errors. Or something like that. That would leave it at some 80-100, maybe.

That's why I favor a solution in MacPorts base, whether that's to check only a handful of servers each time MacPorts fetches a port's files until after a few ports all servers have been checked (seems doable), or limit the number of servers pinged at a time but check them all before fetching the first port's files (seems difficult, as Josh said, and could make the user wait longer than they expected for all the results to come in).

That would be great.

One thing I noticed yesterday, not only cpan, is that some srv have really long response times that well exceed the 3 sec.

comment:24 follow-up: 26 Changed 4 years ago by ryandesign (Ryan Carsten Schmidt)

Replying to iEFdev:

some srv have really long response times that well exceed the 3 sec.

Are you talking about the ping response time or the http/https/ftp response time? MacPorts only checks the ping response time and aborts the ping check if the response takes > 3 seconds.

comment:25 Changed 4 years ago by ryandesign (Ryan Carsten Schmidt)

Replying to ryandesign:

It seems more likely to me that MacPorts is overtaxing the OS by launching so many simultaneous processes and thereby using up so many file descriptors simultaneously.

It's possible that a different problem we see on our Snow Leopard x86_64 buildbot worker could also be caused by a lack of file descriptors; see #59691. So if we could find out (perhaps with some additional logging as suggested in that ticket) whether we are just gradually running out of file descriptors, and figure out how to fix that, we could solve two problems at once.

comment:26 follow-up: 27 Changed 4 years ago by iEFdev

Replying to ryandesign:

Replying to iEFdev:

some srv have really long response times that well exceed the 3 sec.

Are you talking about the ping response time or the http/https/ftp response time? MacPorts only checks the ping response time and aborts the ping check if the response takes > 3 seconds.

Yes, that's prob the http/ftp, from when I went through the list(s) - when I updated the mirror_sites.tcl for the PR.

comment:27 follow-up: 28 Changed 4 years ago by ryandesign (Ryan Carsten Schmidt)

Replying to iEFdev:

Yes, that's prob the http/ftp, from when I went through the list(s) - when I updated the mirror_sites.tcl for the PR.

Ok well it would be normal for some servers to have slow download speeds for you since not all servers are close to you. That's why we ping them: to hopefully find those that will have the fastest speed for you wherever you are in the world.

If there are servers with slow download speeds but also low ping times such that MacPorts picks those servers instead of other servers that have faster download speeds, then that would be more relevant. It could be caused by a problem with that server or with the network connections between you and them. But there's not much we can do about that either. If there is a server that is delivering poor performance for everybody and the server administrators cannot resolve it then we could remove it from the list.

comment:28 Changed 4 years ago by iEFdev

Replying to ryandesign:

If there are servers with slow download speeds but also low ping times such that MacPorts picks those servers instead of other servers that have faster download speeds, then that would be more relevant. It could be caused by a problem with that server or with the network connections between you and them. But there's not much we can do about that either. If there is a server that is delivering poor performance for everybody and the server administrators cannot resolve it then we could remove it from the list.

Yes, that'd be great. All I did now (in the PR), apart from updating the mirrors from official lists where there was one, was checking the http status. When it was not ok, I visited them all manually just to see if they were alive or not. It took some time, but I believe it was worth it. For cpan, I removed a few that looked “bad/unstable” on their status page. Other than that, it's just an updated list, including the removal of the old php mirrors (as discussed in #60558).

comment:29 Changed 4 years ago by ryandesign (Ryan Carsten Schmidt)

Replying to ryandesign:

- MacPorts base could have a hard upper limit of the number of hosts for which it will check ping times at a time, say 50 or 100. If there are more hosts than that such as in the case of perl, MacPorts could pick 50 or 100 hosts from the list at random (although maybe the MacPorts mirrors should always be included). Ideally any excess hosts that were not checked would be marked for immediate recheck, so that if a user upgrades several perl modules at once then for the first module maybe not all hosts were pinged but by the second or third module all of the pingtimes are known.

comment:30 follow-up: 31 Changed 4 years ago by ryandesign (Ryan Carsten Schmidt)

Eric, it would be great if you could try my PR and let me know if that fixes the problem for you. If not, you could try reducing max_hosts_to_ping in my PR from 50 to 30 or something. It would also be good to know if you see any other problems as a result of using my PR.

comment:31 Changed 4 years ago by iEFdev

Replying to ryandesign:

Eric, it would be great if you could try my PR and let me know if that fixes the problem for you. If not, you could try reducing max_hosts_to_ping in my PR from 50 to 30 or something. It would also be good to know if you see any other problems as a result of using my PR.

Yes, I will. I've replaced the file locally, and installed a perl package I didn't already have. It chose a MacPorts mirror, so maybe it didn't go through the mirror lists?

Installing the same port from source picked another mirror:

    $ sudo port -s install p5.30-www-curl
    ---> Computing dependencies for p5.30-www-curl
    ---> Fetching distfiles for p5.30-www-curl
    ---> Attempting to fetch WWW-Curl-4.17.tar.gz from http://cpan.perl.pt/modules/by-module/WWW
    ---> Verifying checksums for p5.30-www-curl
    // ... //

Failed to build though, but that's another thing.

I did an earlier update to that file from another change you did. Think it was merged and I assume it's in 2.6.3 - which I've updated to. (PR:177 <(comment:14))

It has been better lately, and I've been able to update my perl ports without any hiccups. I thought maybe it was that earlier change.

With the new change (PR:199)… Don't know what to expect, but it felt like it was much faster now. Didn't take long to pick a mirror, so I guess it works much better(/faster) – and I know how huge that mirror list is for perl.

Changed 4 years ago by iEFdev

comment:32 Changed 4 years ago by iEFdev

Attached the file **/macports/pingtimes if that is of any help. It's from after that port -s install …. I think it looks a bit “lighter” than usual. Hope that is a good sign.

comment:33 follow-up: 34 Changed 4 years ago by ryandesign (Ryan Carsten Schmidt)

Yes, the previous PR that used only ping and no longer used cut and grep was included in 2.6.3.

The new PR limits MacPorts to pinging 50 sites at a time. So first MacPorts figures out which sites are candidates to use for the port you're installing (including our official mirrors), then figures out which of those have not been pinged in the past 24 hours, and if there are more than 50, it pings 50 of them at random, rather than pinging all of them (potentially hundreds, as we previously discussed) as it used to. This might result in the server that is actually closest to you not being used on that installation if it wasn't among the 50 random pings. If you then install or upgrade another port, that could lead to up to another 50 hosts being pinged, potentially uncovering a server closer than was found in the first 50. And so on.
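
The selection steps described above (collect candidates, keep those not pinged in the past 24 hours, ping at most 50 chosen at random) can be sketched like this. It is a Python illustration under stated assumptions, not the PR's Tcl code: `hosts_needing_ping` and the `last_pinged` mapping of host name to timestamp are hypothetical names, and only the 24-hour window and the limit of 50 come from the comment.

```python
import random
import time

PING_TTL_SECONDS = 24 * 60 * 60  # "not been pinged in the past 24 hours"
MAX_HOSTS_TO_PING = 50           # the limit named in the PR


def hosts_needing_ping(candidates, last_pinged, now=None,
                       max_hosts=MAX_HOSTS_TO_PING, rng=random):
    """Return the hosts to ping on this run.

    `last_pinged` maps host -> UNIX timestamp of its last ping; hosts that
    are missing from it count as never pinged.
    """
    if now is None:
        now = time.time()

    never = float("-inf")
    stale = [h for h in candidates
             if now - last_pinged.get(h, never) >= PING_TTL_SECONDS]

    # Only sample when the stale set exceeds the cap; otherwise ping them all.
    if len(stale) > max_hosts:
        return rng.sample(stale, max_hosts)
    return stale
```

Hosts left out of one sample stay stale, so a later install or upgrade can pick them up, which is how a closer server may still be discovered on a subsequent run.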

comment:34 follow-up: 35 Changed 4 years ago by iEFdev

Replying to ryandesign:

This might result in the server that is actually closest to you not being used on that installation if it wasn't among the 50 random pings. …

That explains the Portuguese server (.pt) it chose. And that it worked. 👍

Replying to ryandesign:

…, you could try reducing max_hosts_to_ping in my PR from 50 to 30 or something.

set max_hosts_to_ping 50

Would it be possible to change this value later in the conf-files? Like a setting. If not, maybe that would be a good setting to have available for others later on?

Just a thought…

comment:35 follow-up: 36 Changed 4 years ago by ryandesign (Ryan Carsten Schmidt)

Replying to iEFdev:

Would it be possible to change this value later in the conf-files?

Not with the way I wrote it in that PR. It could conceivably be changed to make that possible.
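
One way such a setting could look, purely as a sketch: the Python below reads a hypothetical `max_hosts_to_ping` key from configuration text and falls back to 50 when the key is absent or invalid. It assumes a plain `name value` line format with `#` comments; no such option is confirmed anywhere in this ticket, and the function name is invented for illustration.

```python
DEFAULT_MAX_HOSTS_TO_PING = 50  # the value hard-coded in the PR


def read_max_hosts_to_ping(conf_text, default=DEFAULT_MAX_HOSTS_TO_PING):
    """Return the configured ping limit, or `default` if unset or invalid.

    The key name `max_hosts_to_ping` is hypothetical; it mirrors the
    variable in the PR but is not a documented setting.
    """
    value = default
    for raw in conf_text.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments and whitespace
        if not line:
            continue
        parts = line.split(None, 1)
        if len(parts) == 2 and parts[0] == "max_hosts_to_ping":
            try:
                parsed = int(parts[1])
            except ValueError:
                continue  # ignore non-numeric values
            if parsed > 0:
                value = parsed
    return value
```

Falling back to the built-in default keeps behavior unchanged for anyone who never sets the key.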

comment:36 Changed 4 years ago by iEFdev

Replying to ryandesign:

Not with the way I wrote it in that PR. It could conceivably be changed to make that possible.

If this is the last part of the fix, and the tipping point is whether it fails on 30|50|NN… I guess as a preference option it would be a little bit “future-proof”, sort of… (i.e. avoiding more changes), wouldn't it?

I've cleaned up the last parts in the mirror_sites.tcl now (PR:7257). So if/when that gets merged, I guess we can close this one – since it seems to work now.*

* I installed another perl port today and it picked a mirror fast, and without any hiccups.

comment:37 Changed 4 years ago by iEFdev

Don't know if #17738 is (half-)related or not, but when this is fixed I guess that one isn't relevant anymore.

comment:38 Changed 4 years ago by ryandesign (Ryan Carsten Schmidt)

I'm not sure why you think that.

comment:39 Changed 4 years ago by Eric F <3958664+iefdev@…>

comment:40 Changed 4 years ago by ryandesign (Ryan Carsten Schmidt)

| Owner: | set to ryandesign |

|---|---|

| Resolution: | → fixed |

| Status: | new → closed |

comment:41 Changed 4 years ago by breun (Nils Breunese)

| Cc: | breun added |

|---|

comment:42 Changed 4 years ago by ryandesign (Ryan Carsten Schmidt)

So far three users have reported crashes due to this same issue on Big Sur. That's being tracked at #61683.

Certainly it's not intended behavior for MacPorts to affect your Internet connection. I've never heard of that happening before and can't imagine how it would be possible. Of course, if you've used MacPorts to install software that is designed to interact with your Internet connection, it's imaginable that it could be misconfigured or broken in a way that might affect your Internet connection.

I'm not familiar with dnscrypt-proxy and don't know whether it could be involved in the problem you're seeing.

MacPorts does use ping to determine which servers are closest to you to hopefully improve download speeds.

Based on the widget at the top right of the window in your screenshot, it looks like you're running OS X 10.7, 10.8 or 10.9, which are of course old by now. Maybe upgrading your OS, if that's possible on your hardware, would help alleviate this problem.

It may be unrelated, but ever since I set up a MacPorts build machine for 32-bit Mac OS X 10.6 in 2016 it has experienced intermittent kernel panics. Very often this occurs when building perl modules, and often the crash log shows that ping was the active process. I haven't seen the problem on our 64-bit build machines for 10.6 or later. But maybe there is a bug in ping, or a bug in the way that MacPorts calls it, that is only seen on specific computer configurations running old OS versions.